Deshredding tool

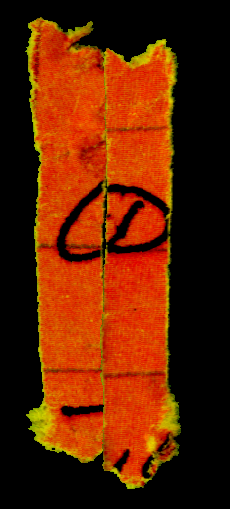

I’ve been playing with the DAPRA deshredding challenge. The challenge is to reconstruct the original text of a series of shredded pages (like the one shown above). That image is the easiest challenge, the fragments get progressively smaller, and the problem gets progressively harder. Computationally it seems rather tough! I wanted to post my work in progress and some details of the issues you encountered.

First of all, my current code base is here: https://github.com/new299/DS. There are a number of tools, all written and C++ and I’m afraid very badly and quickly hacked together.

ds_isolate: This tool isolates individual fragments from the image (image segmentation). It also tries to get all fragments in the same orientation. You can see an example output in the directory puzzle1

ds_orientate: This tool tries to get the fragments facing the right way. I.e. all “pointing” up.

ds_clean: This tool attempts create binary versions of the fragments, dividing the individual fragments into foreground/background pixels. It also create “edge_walk” files. These are walks around the edge of the fragment which contains 0 for a background pixel and 1 for a foreground pixel.

ds_align: This tool tries to align all the edge_walks. This is hard!

ds_qc: This is a SDL based tool for moving around and plaything with the fragments. It can also perform some alignment using the edge_walk files on the fly.

I built all this on a mac. If you want to do the same, you’ll need to install libtiff and SDL.

So, here’s my method in a little more detail:

ds_isolate – image segmentation

The segmentation progress is pretty straight forward, they’ve given us relatively nice clean images to play with. To segment I pick start pixels 10 pixel in from the edges of all corners. I then perform a random walk, only moving to pixels with a near RGB value. The distance function is simple euclidean distance.

I choose a limit of “10” because that seemed to work, pretty good enough. During the random walk I collect all the pixel I encounter and stick them in a vector. I let the random walks continue for 50,000,000 (that’s probably WAY more than required). I then take this set of pixels and expand it a little, adding all pixels within a difference of 10 in R,G,B space (quick and dirty).

I then remove (set to 0) all the pixels which have a value in that dataset. Once this is done I then extract all connected pixels that are non-0. Any fragments that are too small (say 20pixels), I throw out.

I think the segmentation there is pretty clean. On the right you can see a dark artifact, those artifacts are not always present, but are common enough to be annoying.

Now the images are segmented the individual fragments are stored in a directory as follows: OUTPUTDIR/fragment_N/original.tif

ds_orientate – Get the fragments in the correct orientation

You can see that the fragment above isn’t pointing straight up. I decided that it would make down stream processing much easier if all the fragments were in the same orientation. This is what ds_orientate does. The tool rotates the images, in increments of 0.01 radians. After each rotation it checks the width of the image. After all rotates we select the rotation which resulted in the minimal width. Because all the fragments are /kind of/ rectangular this should get them all “standing up”. However they could still be rotated by 180 degrees. So I then check if the “pointy” end is at the bottom, if it isn’t I rotate the image by 180 degrees.

Here’s the rotated image:

Again, this works pretty well. It breaks with some fragments, particularly those with deformed ends or those that are completely bent.

ds_clean – Cleaning fragments and extracting edge walks

This is where things start to get tricky. This tool attempts to divide the fragment in to foreground and background components. It takes the fragments and converts them to greyscale images, and simple thresholding function is them applied. I also have a function that specifically tries to repair linear features (the lines on the paper). However as you can see here, it hasn’t done a great job with the lines.

After thresholding the image, ds_clean performs edge walks along the edges of the fragment. It identifies the top left/right and bottom left/right corners. It then performs a pixel walk along the edge and produces a string e.g.

00000000000000000000000000000000000000000000000000111111111100000000000000000000000000000000000111111100000000000000000000000

Where 1s represent where the tool found a foreground pixel, 0s a background pixel. It writes those strings to files in the directory structure called “edge_walk”.

ds_align – Attempt to align all the fragment edges

ds_align takes all the edge_walk files and attempts to align the left and right sides against each other. It takes every overlap of each pair of strings and performs a weighted edit distance between them. It’s weighted so that foreground pixels count for more than background pixels. This results is a huge matrix of “what aligned best” for each fragment.

A work in progress

So, that’s where I got to so far. The alignment process works in /some/ cases, but isn’t great. I think perhaps I need to take fragment contours into account. However, I’m not sure how much more time I can devote to this project. Perhaps if someone out there wants to team up?? 🙂

A case that causes problems

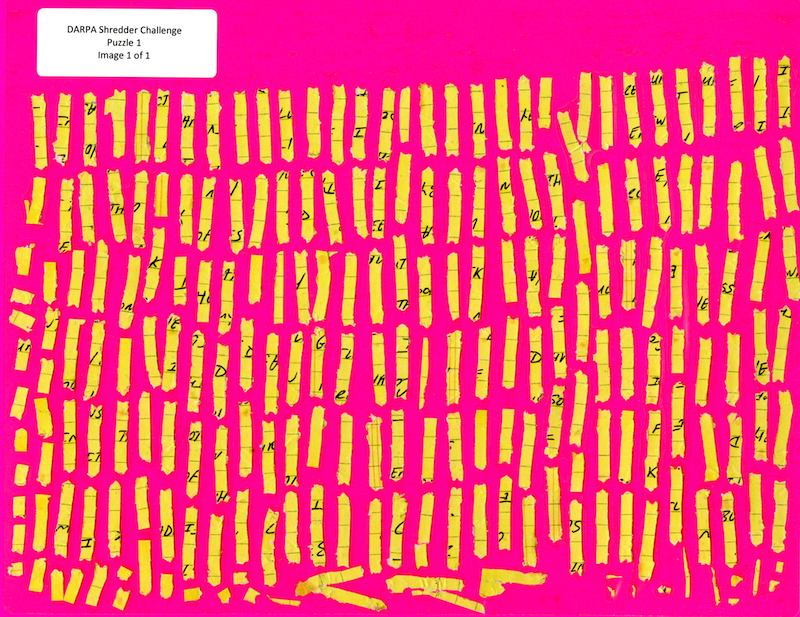

This is the top hit for one of the fragments. It aligns really well because almost the whole edge is touching, and the black parts match on the top character!

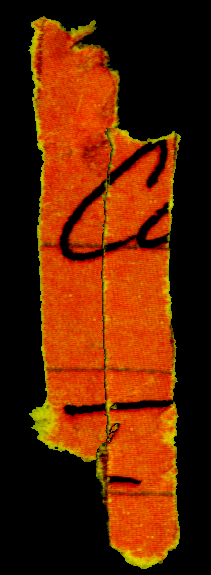

This is the correct alignment, but my code has it as the second hit. Thing like this will be a problem and are caused by the fact that the shredder doesn’t keep all the fragments in alignment (which is great from the shredder makers point of view of course). But because less of the edge is touching, it gets scored lower. Even though a human observer and see that this is the correct match.

In this case I could possibly solve the problem by up weighting the foreground pixel scores. But the general case maybe more difficult. And I’m wondering if more about the shape of the character, and the direction of the lines near the edge of the fragment needs to be taken in to account.

I have just read the blog post and not looked into the code. From what I understood, you are trying to align merely by seeing if the foreground and background of the two edges match along the edges. I would have wanted to match the angle at which the glyphs intersect with the edge taken into account as well. To find the angle you can probably look , maybe, 10(and tweak this depending on the results) pixels inside from the edge of a chad, and use that and the pixel right on the edge to calculate the angle apporximately. A match would be when the angle on two chads match, in addition to edge matching (as you are doing right now).

I ain’t very good at coding and hence not being able to implement anything. I am still stuck at orienting the images. I am using MATLAB.

Hope this helps!

Hi,

I like the problem and wanna work on it.

I couldn’t be able to compile it although i did what u said.

Could u explain how to compile a bit more.

Also do u have any tools that u said “hacked badly and quickly”.

Thanks,

nice!

on could try to generate a vector of the outline of the characters around the edge of the snippets and rate those snippets higher that share a similar vector.